What is "multiple +12V rails", really?

In most cases, "multiple +12V rails" are actually a single +12V source split into multiple +12V outputs, each with a limited output capability. That limit is what "OCP" ("Over Current Protection") is: a protection in the PSU's housekeeping IC that shuts off the PSU when a predefined amount of current is exceeded.
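Here's a minimal Python sketch of that idea. The rail names and the 20A limits are hypothetical values picked for illustration, and in a real PSU the sensing is done by hardware and the housekeeping IC, not software. The logic is the point: each rail has its own limit, and if any one rail exceeds it, the whole PSU shuts off.

```python
# Hypothetical per-rail OCP limits in amps (illustrative, not from any real PSU).
OCP_LIMITS_A = {"CPU": 20.0, "PERIPHERALS": 20.0, "PCIE": 20.0}


def tripped_rails(loads_a: dict[str, float], limits_a: dict[str, float]) -> list[str]:
    """Return the rails whose current draw exceeds their OCP limit."""
    return [rail for rail, limit in limits_a.items() if loads_a.get(rail, 0.0) > limit]


def psu_shuts_down(loads_a: dict[str, float], limits_a: dict[str, float]) -> bool:
    """One overloaded rail is enough to shut off the entire PSU."""
    return bool(tripped_rails(loads_a, limits_a))


normal = {"CPU": 12.0, "PERIPHERALS": 6.0, "PCIE": 15.0}
overloaded = {"CPU": 12.0, "PERIPHERALS": 6.0, "PCIE": 22.0}
print(psu_shuts_down(normal, OCP_LIMITS_A))     # False
print(tripped_rails(overloaded, OCP_LIMITS_A))  # ['PCIE']
```

Note that a rail sitting exactly at its limit doesn't trip; only demand *beyond* the limit does.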

There are a few units that actually have two +12V sources, but these are typically very high-output power supplies. In most cases, these +12V outputs are split up again to form a total of four, five or six +12V rails for even better safety. To be clear: these REAL multiple +12V rail units are very rare and are all 1000W+ units (for example, the original Corsair HX1000, Enermax Galaxy, Topower/Tagan "Dual Engine", and Thermaltake Tough Power 1000W & 1200W).

The original HX1000 is LITERALLY two 500W PSUs crammed into one housing.

So why do they split up +12V rails?

Safety. It's done for the same reason that there's more than one circuit breaker in your house's distribution panel. The goal is to limit the current through each wire to what that wire can carry without getting dangerously hot.

Short circuit protection only works if there's minimal to no resistance in the short (like two wires touching, or a hot lead touching ground such as the chassis wall). If the short occurs on a PCB, in a motor, etc., the resistance in the circuit will typically NOT trip short circuit protection. Instead, the short essentially creates a load. Without OCP, that load just keeps increasing until the wire heats up, the insulation melts off, and there's a molten pile of flaming plastic at the bottom of the chassis. This is why rails are split up and "capped off" in most power supplies: it's a safety measure.
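The difference between a dead short and a resistive fault is just Ohm's law (I = V / R). The sketch below assumes a hypothetical short circuit protection trip point of 60A and a hypothetical per-rail OCP limit of 20A (neither comes from a real PSU). A near-zero-resistance short pulls enough current to trip SCP, while a fault of around half an ohm pulls a current SCP never notices and only a per-rail OCP limit would catch.

```python
RAIL_VOLTAGE_V = 12.0
SCP_TRIP_A = 60.0   # hypothetical short circuit protection trip point
OCP_LIMIT_A = 20.0  # hypothetical per-rail OCP limit


def fault_current_a(resistance_ohm: float, voltage_v: float = RAIL_VOLTAGE_V) -> float:
    """Current drawn by a resistive fault, from Ohm's law I = V / R."""
    return voltage_v / resistance_ohm


def classify_fault(resistance_ohm: float) -> str:
    """Which protection (if any) would react to a fault of this resistance."""
    current = fault_current_a(resistance_ohm)
    if current > SCP_TRIP_A:
        return "SCP trips"
    if current > OCP_LIMIT_A:
        return "only per-rail OCP trips"
    return "nothing trips"


for r in (0.05, 0.5, 2.0):
    print(f"{r} ohm -> {fault_current_a(r):.1f} A: {classify_fault(r)}")
```

The 0.5 ohm case (24A) is the dangerous one: it looks like an ordinary heavy load to SCP, so without a per-rail cap nothing stops the wire from cooking.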

Is it true that some PSUs that claim to have multiple +12V rails don't have the +12V rail split at all?

Yes, this is true, but it's the exception and not the norm. It's typically seen in Seasonic S12/M12-based units (like the Corsair HX520 and HX620 and the Antec True Power Trio). It's actually cheaper to make a single +12V rail PSU because you forgo all of the components used to sense current and to split up and limit each rail, and this may be one reason some OEMs don't split the rails but say they are split. Some system builders adhere very closely to the ATX12V specification for liability reasons, so a company that wants that business but also wants to save money and reduce R&D costs will often "fib" and say the PSU has its +12V split when it does not.

Why don't those PSU companies get in trouble? Because Intel actually lifted the split +12V rail requirement from the ATX spec. It's now "recommended" and not "required".

So does splitting the +12V rails provide "cleaner and more stable voltages" like I've been told in the past?

It is true that marketing folks have told us that multiple +12V rails provide "cleaner and more stable voltages", but this is a complete falsehood. Quite frankly, they use this explanation because "offers stability and cleaner power" sounds much more palatable than "won't necessarily catch fire". As I said before, there is typically only one +12V source, and no additional filtering stage is added when the rails are split off that would make them any more stable or cleaner than if they weren't split at all.

Why do some people spread FUD that single is better?

Because there are a few examples of companies that produced power supplies with four +12V rails, something that in theory should provide MORE than ample power to a high-end gaming rig, and screwed up. These PSU companies followed the EPS12V specification, which is for servers, not "gamers". They put ALL of the PCIe connectors on one of the +12V rails instead of adding an extra rail and splitting the PCIe connectors across the two. With all of the PCIe connectors on a single +12V rail with a relatively low OCP, that rail was easily overloaded and caused the PSU to shut down during intense games. The earliest example of this was the PC Power and Cooling Turbo-Cool 1000W. Instead of correcting the problem, they just did away with splitting the +12V rails altogether and started a marketing campaign exclaiming that "single +12V rail is better!"

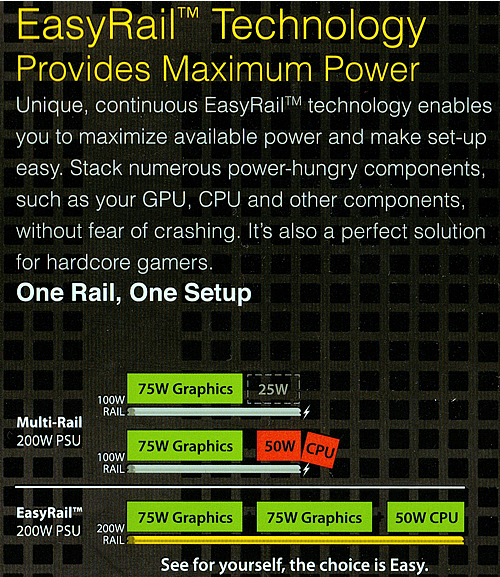

This marketing quickly went off the rails and actually ended up confusing customers, which led me to write this article. A prime example of marketing going off the rails is XFX's marketing of "Easy Rail" and its claim that "unused power" on a rail is "lost and not able to be used" and "causes crashing".

Multiple +12V rail "enthusiast" PSUs today have a +12V rail just for PCIe connectors, or may even split four or six PCIe connectors across two different +12V rails. The rails themselves are capable of far more power output than any PCIe graphics card would ever need. In fact, Nvidia SLI certification these days REQUIRES that the PCIe connectors be on their own +12V rail to avoid any problems running high-end graphics cards on split +12V rail PSUs.

As I stated earlier, there are fewer components and less engineering involved in making a PSU that DOES NOT have its +12V rail split up, so it's cheaper to manufacture (about $1.50 less on the BOM, $2 to $3 at retail). Typically this cost savings is NOT passed on to the consumer, so it actually behooves marketing to convince you that you only need a single +12V rail.

But some people claim they can overclock better, etc. with a single +12V rail PSU

B.S. It's a placebo effect. The reality is that their previous PSU was defective or just wasn't as good as their current unit. If the old PSU was a cheap-o unit with four +12V rails and the new one is a PCP&C with one +12V rail, the new one isn't overclocking better because it's a single +12V rail unit. It's overclocking better because the old PSU was crap. It's only a coincidence that the old PSU had multiple +12V rails and the new one has just one.

The only "problem" that occurs with multiple +12V rails is that when a +12V rail is overloaded (for example, more than 20A is being demanded from a rail set to deliver only up to 20A), the PSU shuts down. Since there are no per-rail "limits" on single +12V rail PSUs, you cannot overload a rail and cause the PSU to shut down... unless you're using a "too-small" PSU in the first place. Single +12V rails do not have better voltage regulation, do not have better ripple filtering, etc. unless the PSU is better to begin with.

So there are no disadvantages to using a PSU with multiple +12V rails?

No! I wouldn't say that at all. To illustrate potential problems, I'll use these two examples:

Example 1:

An FSP Epsilon 700W has ample power for any SLI rig out there, right? But the unit only comes with two PCIe connectors, each on its own +12V rail. Each of these rails provides up to 18A, which is almost three times what a 6-pin PCIe power connector is designed to deliver! What if I want to run a pair of GTX cards? It would have been ideal if they had put two PCIe connectors on each of those rails instead of just one, but instead, those with GTX SLI are forced to use Molex-to-PCIe adapters. Here comes the problem: when you use Molex-to-PCIe adapters, you add the load from the graphics cards onto the rail that's also supplying power to all of your hard drives, optical drives, fans, CCFLs, water pump... you name it. Suddenly, during a game, the PC shuts down completely.

Solution: To my knowledge, there aren't one-to-two PCIe adapters. Ideally, you'd want to open that PSU up and solder another pair of PCIe connectors to the rails the existing PCIe connectors are on, but alas... that is not practical. So even if your PSU has MORE than ample power for your next graphics card upgrade, if it doesn't come with all of the appropriate connectors, it's time to buy another power supply.
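The numbers in Example 1 are easy to check. A 6-pin PCIe connector is rated for 75W, which at 12V is 6.25A, so an 18A rail has roughly 2.9x that. The peripheral-rail part of this sketch is hypothetical: the 18A limit and the 8A of drives, fans and pump are assumptions for illustration, not FSP's published figures.

```python
PCIE_6PIN_RATING_W = 75.0  # 6-pin PCIe auxiliary power connector rating
RAIL_VOLTAGE_V = 12.0
PCIE_RAIL_LIMIT_A = 18.0   # per-rail rating quoted for the FSP Epsilon 700W above

pcie_6pin_max_a = PCIE_6PIN_RATING_W / RAIL_VOLTAGE_V  # 6.25 A
headroom_ratio = PCIE_RAIL_LIMIT_A / pcie_6pin_max_a   # 2.88x

# Hypothetical peripheral rail: limit and baseline load are illustrative assumptions.
PERIPHERAL_RAIL_LIMIT_A = 18.0
drives_fans_pump_a = 8.0
adapters = 2  # two Molex-to-PCIe adapters, each potentially pulling a full 6-pin load
peripheral_load_a = drives_fans_pump_a + adapters * pcie_6pin_max_a  # 20.5 A

print(f"6-pin PCIe max: {pcie_6pin_max_a:.2f} A")
print(f"PCIe rail headroom: {headroom_ratio:.2f}x")
print(f"Peripheral rail with adapters: {peripheral_load_a:.1f} A, "
      f"trips OCP: {peripheral_load_a > PERIPHERAL_RAIL_LIMIT_A}")
```

The PCIe rails themselves are nowhere near their limit; it's the peripheral rail, now carrying graphics load it was never meant to see, that trips.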

Example 2:

Thermo-Electric Coolers (TECs, aka "Peltiers") take a lot of power and are typically powered by Molex connectors. I, for one, prefer to run TECs on their own power supply, but that's not always an option. If you had a power supply with split +12V rails and powered your TECs with Molexes, you would be putting your TECs on the same +12V rail as the hard drives, optical drives, fans, CCFLs, water pump... you name it, just as with the Molex-to-PCIe adapters. The power supply could shut down on you in the middle of using it. A power supply with a single, non-split +12V rail has no limit on how much power is delivered to any particular group of connectors, so you could run several TECs off of Molex connectors without any problems, as long as the total load stays within the PSU's capacity.
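To show the same load behaving differently on the two kinds of PSU, here's a small sketch. Every figure is a hypothetical assumption: 6A per TEC, 5A of drives/fans/pump on the Molex rail, a 20A per-rail limit for the split unit and a 60A total limit for the single-rail unit.

```python
TEC_CURRENT_A = 6.0  # hypothetical draw per TEC at 12 V


def split_rail_trips(rail_loads_a: dict[str, float], per_rail_limit_a: float) -> bool:
    """Split-rail PSU: any single rail over its OCP limit shuts everything down."""
    return any(load > per_rail_limit_a for load in rail_loads_a.values())


def single_rail_trips(rail_loads_a: dict[str, float], total_limit_a: float) -> bool:
    """Single-rail PSU: only the combined +12V load matters."""
    return sum(rail_loads_a.values()) > total_limit_a


loads = {
    "CPU": 10.0,
    "PCIE": 15.0,
    "MOLEX": 5.0 + 3 * TEC_CURRENT_A,  # drives, fans, pump plus three TECs = 23 A
}
print(split_rail_trips(loads, per_rail_limit_a=20.0))  # True: the Molex rail is over 20 A
print(single_rail_trips(loads, total_limit_a=60.0))    # False: 48 A total is fine
```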

Typical multiple +12V rail configurations:

- 2 x 12V rails

- Original ATX12V specification's division of +12V rails.

- One rail to the CPU, one rail to everything else.

- VERY old school since it's very likely that "everything else" may include a graphics card that requires a PCIe connector.

- Typically only seen on PSU's < 600W.

- 3 x 12V rails

- A "modified" ATX12V specification that takes into consideration PCIe power connectors.

- One rail to the CPU, one rail to everything else but the PCIe connectors and a third rail just for PCIe connectors.

- Works perfectly for SLI, but not good for PC's requiring four PCIe connectors.

- 4 x 12V rails (EPS12V style)

- Originally implemented in EPS12V specification

- Because typical application meant deployment in dual processor machine, two +12V rails went to CPU cores via the 8-pin CPU power connector.

- "Everything else" is typically split up between two other +12V rails. Sometimes 24-pin's two +12V would share with SATA and Molex would go on fourth rail.

- Not really good for high end SLI because a graphics card always has to share with something.

- Currently Nvidia will NOT SLI certify PSU's using this layout because they now require PCIe connectors to get their own rail.

- In the non-server, enthusiast/gaming market we don't see this anymore. The "mistake" of implementing this layout was only done initially by two or three PSU companies in PSU's between 600W and 850W and only for about a year's time.

- 4 x 12V rails (Most common arrangement for "enthusiast" PC)

- A "modified" ATX12V, very much like 3 x 12V rails except the two, four or even six PCIe power connectors are split up across the additional two +12V rails.

- If the PSU supports 8-pin PCIe or has three PCIe power connectors on each of the +12V rails, it's not uncommon for their +12V rail to support a good deal more than just 20A.

- This is most common in 700W to 1000W power supplies, although for 800W and up power supplies it's not unusual to see +12V ratings greater than 20A per rail.

- 5 x 12V rails

- This is very much what one could call an EPS12V/ATX12V hybrid.

- Dual processors still each get their own rail, but so do the PCIe power connectors.

- This can typically be found in 850W to 1000W power supplies.

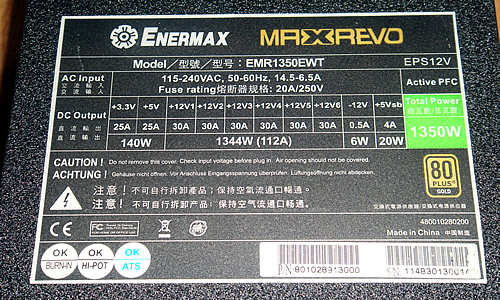

- 6 x 12V rails

- This is the mack daddy because it satisfies EPS12V specifications AND four or six PCIe power connectors without having to exceed 20A on any +12V rail

- Two +12V rails are dedicated to CPU cores just like an EPS12V power supply.

- 24-pin's +12V, SATA, Molex, etc. are split up across two more +12V rails.

- PCIe power connectors are split up across the last two +12V rails.

- This is typically only seen in 1000W and up power supplies.

This Enermax MaxRevo 1350W has SIX +12V rails.
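To see why the EPS12V-style layout (and the PC Power and Cooling mistake described earlier) falls over with SLI while the enthusiast 4-rail layout doesn't, here's a sketch that wires the same connectors to rails both ways and sums the load per rail. The per-connector currents, the 20A limit on every rail, and the exact connector-to-rail mapping are hypothetical simplifications, not any specific PSU's wiring.

```python
from collections import defaultdict

RAIL_LIMIT_A = 20.0  # hypothetical OCP limit on every rail

# Hypothetical current drawn through each connector (amps) by a two-card SLI rig.
CONNECTOR_LOAD_A = {
    "CPU_A": 7.5, "CPU_B": 7.5,  # the two CPU feeds of the 8-pin connector
    "ATX_24PIN": 4.0, "SATA": 3.0, "MOLEX": 3.0,
    "PCIE1": 5.5, "PCIE2": 5.5, "PCIE3": 5.5, "PCIE4": 5.5,
}

# Simplified connector-to-rail wiring for the two 4 x 12V layouts described above.
EPS_STYLE = {
    "CPU_A": "12V1", "CPU_B": "12V2",
    "ATX_24PIN": "12V3", "SATA": "12V3",
    "PCIE1": "12V3", "PCIE2": "12V3", "PCIE3": "12V3", "PCIE4": "12V3",
    "MOLEX": "12V4",
}
ENTHUSIAST = {
    "CPU_A": "12V1", "CPU_B": "12V1",
    "ATX_24PIN": "12V2", "SATA": "12V2", "MOLEX": "12V2",
    "PCIE1": "12V3", "PCIE2": "12V3",
    "PCIE3": "12V4", "PCIE4": "12V4",
}


def rail_loads(layout: dict[str, str]) -> dict[str, float]:
    """Sum the connector loads that land on each rail."""
    totals: dict[str, float] = defaultdict(float)
    for connector, rail in layout.items():
        totals[rail] += CONNECTOR_LOAD_A[connector]
    return dict(totals)


def overloaded(layout: dict[str, str]) -> list[str]:
    """Rails whose summed load exceeds the OCP limit."""
    return sorted(r for r, amps in rail_loads(layout).items() if amps > RAIL_LIMIT_A)


print("EPS12V style:", rail_loads(EPS_STYLE), "overloaded:", overloaded(EPS_STYLE))
print("Enthusiast:  ", rail_loads(ENTHUSIAST), "overloaded:", overloaded(ENTHUSIAST))
```

Same connectors, same total load; the only difference is where the PCIe connectors are wired, and that alone decides whether a rail trips.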

Ok... What's the bottom line?

The bottom line is, for 99% of the folks out there, single vs. multiple +12V rails is a NON-ISSUE. It's something that has been hyped up by marketing folks on BOTH SIDES of the fence. Too often we see misprioritized requests for PSU advice: people asking "what single +12V rail PSU should I get?" when they aren't even running SLI! Unless you're running a plethora of Peltiers in your machine, it should be a non-issue, assuming the PSU has all of the connectors your machine requires and there is no need for "splitters" (see Example 1 above).

The criteria for buying a PSU should be:

- Does the PSU provide enough power for my machine?

- Does the PSU have all of the connectors I require (6-pin for high-end PCIe, two 6-pin, four 6-pin or even the newer 8-pin PCIe connector)?

- Does the PSU have all of the necessary safety certifications? UL or cTÜVus, CB, FCC, etc.

- Is the PSU rated for continuous or peak power?

- What temperature is the PSU rated at? Room temperature (25°C to 30°C) or actual operating temperature (40°C to 50°C)?

- If room temperature, what's the derating curve? As a PSU runs hotter, its capability to put out power is diminished. If no derating curve can be found, assume that a PSU rated at room temperature may only be able to put out around 75% of its rated capability once installed in a PC.

- Does the unit have active power factor correction (PFC)? If it has a red switch on the back to select the input voltage, it does NOT and should be avoided.

- Is the unit efficient? The more efficient the PSU is, the less power it draws from the wall and the less heat it generates, because any power drawn from the wall that isn't converted to DC output is lost as heat.

- Is the unit quiet?

- Is the unit modular?

- Am I paying extra for bling? You don't get an RGB fan for free, so if an RGB unit doesn't cost more than a comparable PSU without it, you're probably getting a poorer-quality PSU.

- Do I want bling? If your PSU is covered by a shroud, why pay extra for RGB?
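The derating and efficiency questions in that checklist are simple arithmetic. Here's a rough sketch: the 75% factor is the rule of thumb given above for room-temperature-rated units with no published derating curve, while the 650W unit, the 500W load and the 80%/90% efficiency figures are hypothetical examples.

```python
ROOM_TEMP_DERATE = 0.75  # rule of thumb above, for units with no published derating curve


def usable_watts(rated_watts: float, rated_at_operating_temp: bool) -> float:
    """Estimate what the PSU can really deliver once it's installed in a warm PC."""
    return rated_watts if rated_at_operating_temp else rated_watts * ROOM_TEMP_DERATE


def wall_draw_watts(dc_load_watts: float, efficiency: float) -> float:
    """AC power pulled from the wall to deliver a given DC load."""
    if not 0.0 < efficiency <= 1.0:
        raise ValueError("efficiency must be in (0, 1]")
    return dc_load_watts / efficiency


def waste_heat_watts(dc_load_watts: float, efficiency: float) -> float:
    """Wall power that never reaches the DC outputs and is dumped as heat."""
    return wall_draw_watts(dc_load_watts, efficiency) - dc_load_watts


# Hypothetical 650W unit rated only at room temperature, feeding a 500W load.
print(f"usable: {usable_watts(650, rated_at_operating_temp=False):.1f} W")
for eff in (0.80, 0.90):
    print(f"{eff:.0%} efficient: wall {wall_draw_watts(500, eff):.1f} W, "
          f"heat {waste_heat_watts(500, eff):.1f} W")
```

In this example the "650W" unit is really good for about 487.5W in a warm case, which is less than the 500W load, and going from 80% to 90% efficiency cuts the waste heat from 125W to about 56W.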

And for those who like videos, here's one I shot with Tek Syndicate back in 2015: